You should never have to trade privacy for healthcare.

When you send a message in Trellis, it stays inside your private environment.

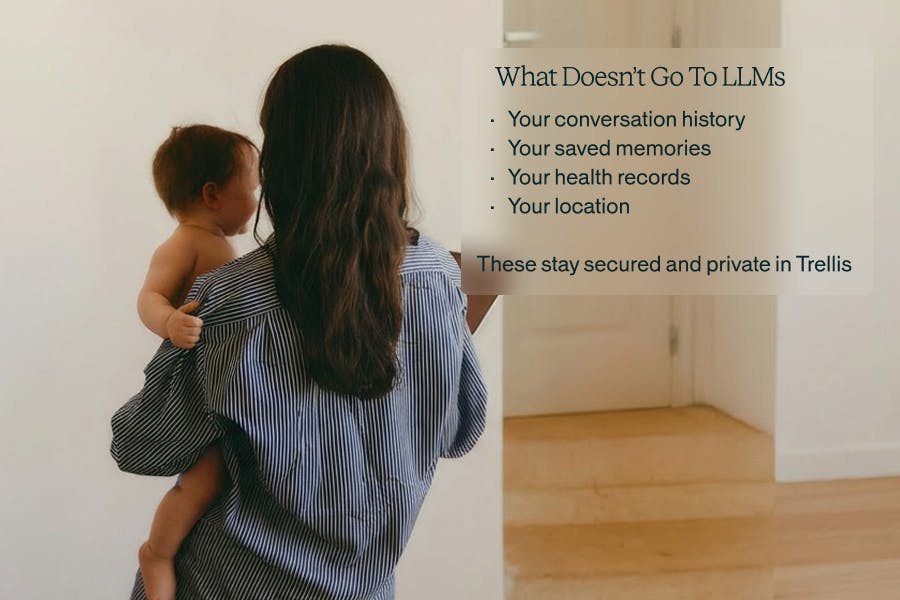

We host dedicated AI systems that help generate answers, but they do not know who you are. These models cannot keep your history and cannot build a profile of you. Only your Trellis health record remembers your conversations, so your care can improve without your life becoming a dataset.

Healthcare works only when people can speak honestly.

This page explains how Trellis is built to make that possible.

Technology that protects your story as carefully as it learns it.

WHAT HAPPENS ON TRELLIS STAYS ON TRELLIS

Your health lives with you, not inside a company, not inside an ad network, not inside a shared AI brain.

Here's what happens when you send a message.

Trellis fragmented identity architecture

Your Trellis Intelligence remembers you, but the outside AI never does.

This is Trellis' Unlinkable Query Intelligence™: personal health intelligence with a deliberately fragmented identity system for every query sent to an external large language model (LLM).

- When your question leaves Trellis Intelligence, it is stripped of identifiers

- It carries no history chain and is mixed in with everyone else's queries

- Your questions don't follow you: there is no cross-user or cross-query learning

- Responses return only to your private model, where they gain full context and history

Think of it like paying with cash instead of building an electronic spending profile.

Privacy by design, not just policy

Policies can change with a meeting.

System architecture is harder to change.

Trellis is built so your privacy does not depend on a future executive, investor, or acquisition decision. The protections come from how the system is constructed, not from a promise we can later rewrite.

What is built into the system:

- Messages are encrypted in transit and at rest

- Each user's health memory and model context are isolated and stored on dedicated hardware

- Your data is siloed and cannot be placed into shared model training pools

- The system runs inside a private, HIPAA-secured environment

- Partner contracts and business associate agreements (BAAs) prohibit secondary data use

Regulatory and operational safeguards:

- Designed to meet HIPAA, GDPR, and CCPA requirements

- Access controls and audit logs restrict who can view records

- Vendors are contractually limited to performing services for your care

We cannot silently convert your records into a dataset because the system does not allow it. You should not need legal expertise to understand how your health information is handled. The protections are structural.

Most healthcare pipes are dirty. Ours are clean.

Modern healthcare is connected through many systems: hospitals, labs, insurers, billing processors, analytics vendors, and apps.

Over time, this infrastructure was built less like a sealed record and more like plumbing: multiple side pipes, outside processors, and permitted secondary uses that patients rarely see.

Under current U.S. law, organizations may use and sell "de-identified" health data without notifying you or asking for your consent. Providers and their vendors can legally profit from these records.

“De-identified” does not always mean anonymous. Large health datasets can often be linked back to individuals when combined with other available information.

Many consumer health apps are not covered by HIPAA at all and may collect and sell identifiable personal data.

This is legal.

This is common.

Common doesn’t mean acceptable.

Trellis was designed so your health information cannot be repurposed.

Your records are used only to deliver your care and your insights: not for advertising, datasets, or analytics markets.

How Trellis operates

- We do not sell or license your health data

- We do not allow use of your data for model training

- We prohibit secondary use by partners and vendors

- We do not provide your information to advertisers or data brokers

- Your data remains inside a private, HIPAA-secured environment

Not identified. Not de-identified. Not aggregated.

One purpose: your care.

Follow the money.

The business model is the privacy policy.

A company’s incentives determine how it treats your information.

Services funded by advertising or data licensing eventually optimize for advertisers, partners, or datasets. A health service funded by its members answers to its members.

Trellis is paid directly by you.

1. You subscribe to the software at a reasonable price

2. You choose optional care and services: tests, clinicians, and whole-family care

3. We succeed only if the service is useful enough for you to keep using it

We do not run ads. We do not sell audience segments. We do not monetize behavioral or health data.

Our incentive is straightforward: build something reliable enough that you choose to return to it for your health decisions.

If we break your trust, we lose our business. That alignment is intentional.

Your body should not have a business model

Health data is different from other data. It includes symptoms, mental health, reproductive history, medications, family conditions, and fears people may never tell anyone else, not even a doctor.

When people think their information may be used against them (for advertising, profiling, or datasets), they change their behavior. They leave things out. They delay care. They avoid questions.

A health service cannot work if patients cannot be candid. Privacy is therefore not a feature. It is a clinical requirement. Trellis is designed so your information remains under your control.

What this means in practice:

- Messages are encrypted

- Conversations and records are never used to train models

- We do not sell, rent, or broker access to your health data

- Your data runs in a private, dedicated, HIPAA-secured environment

Delete means delete

Hard reset, for real.

Two taps in the app.

Model deleted.

Memory gone.

No ghost copies.

No de-identified copies.

No training remnants.

No "for research" exception.

It's like erasing an iPhone, not just clearing your browser cache.

Your health intelligence.

Your health model belongs to you.

Most software stores its users inside a central system. The company's system learns, and individuals become entries inside it.

Trellis is designed the opposite way.

Your records, questions, and patterns shape a personal health intelligence that exists for your care, not as part of a shared company dataset.

Your Trellis Intelligence is informed by your history and your family context, but it does not become part of a central "brain" owned by Trellis.

The system adapts to you.

You are not training the system.

Your private history, by default

Your memory stays inside your environment.

With many AI systems, your conversations can become part of a shared training pool. Those systems improve by absorbing user histories.

In Trellis, your private health model is isolated, and its memory comes from your private four-layer memory system: raw conversations, memories, interpretations, and the model itself.

External models help generate reasoning, but they do not keep your context, your history, or your identity. Your conversations, lab results, and patterns remain inside your Trellis environment. They are used to understand you and not to teach the outside model.

Your Trellis Intelligence can remember you.

The external LLM cannot.

Private, useful, and simple architecture, by choice.

Privacy enables truthful care, which enables better health.

This sits at the foundation of Trellis Health.